Neuromorphic Engineering

Neuromorphic engineering is a relatively young interdisciplinary field within computer science. It takes its inspiration from biology, modeling and simulating the function of the brain's neural and sensory systems in physical circuits. It relies mainly on analog signals, supported by digital technology. The goal of the field is to create systems that can learn from very few inputs and be trained to recognize patterns at a scale that artificial neural networks cannot easily match. A key aspect of neuromorphic engineering is understanding how the morphology of individual neurons, circuits, applications, and overall architectures creates desirable computations, affects how information is represented, influences robustness to damage, incorporates learning and development, adapts to local change (plasticity), and facilitates evolutionary change.

MIT’s Artificial Brain on a Chip

Recently, engineers at the Massachusetts Institute of Technology unveiled a new neuromorphic chip. The chip is about as small as a piece of confetti and is covered with artificial synapses.

Synapses are responsible for transmitting signals between neurons in the nervous system. An artificial synapse tries to mimic this biological signaling to achieve brain-like computing and autonomous learning in edge computers and neuromorphic hardware. The artificial synapses on this chip are made of memristors. A memristor is an electrical component that limits or regulates the flow of electrical current in a circuit and remembers the amount of charge that has previously flowed through it. Memristors are important because they are non-volatile, meaning that they retain memory without power. Memristor-based artificial synapses are crucial because they let neuromorphic chips function like a brain while remaining highly energy efficient when running artificial intelligence. This biological emulation could give rise to next-generation cognitive abilities in artificial neural networks.
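To make the "remembers the charge that has flowed through it" idea concrete, here is a minimal Python sketch of an idealized memristor. It is a toy model and not the MIT device: the class name `ToyMemristor`, the resistance limits, and the constant `k` are all assumptions chosen for illustration. The internal state moves with the charge passed through the device, and it stays put when no current flows, which is the non-volatile behavior described above.

```python
from dataclasses import dataclass


@dataclass
class ToyMemristor:
    """Idealized memristor: resistance depends on the charge that has flowed."""
    r_on: float = 100.0       # ohms when fully "on" (assumed value)
    r_off: float = 16_000.0   # ohms when fully "off" (assumed value)
    k: float = 1_000.0        # state change per coulomb (assumed value)
    x: float = 0.0            # internal state, kept within [0, 1]

    @property
    def resistance(self) -> float:
        # Linear mix of the two limits, weighted by the internal state.
        return self.r_on * self.x + self.r_off * (1.0 - self.x)

    def apply_current(self, current: float, dt: float) -> None:
        """Pass `current` amperes for `dt` seconds; the state tracks the charge."""
        self.x = min(1.0, max(0.0, self.x + self.k * current * dt))


m = ToyMemristor()
r_before = m.resistance

m.apply_current(current=1e-3, dt=0.5)   # a short write pulse
r_after_pulse = m.resistance

m.apply_current(current=0.0, dt=10.0)   # no current: the state must not change
r_after_idle = m.resistance

print(r_before, r_after_pulse, r_after_idle)
```

The resistance drops after the pulse and is unchanged after the idle period, so the stored value survives without power in this simplified picture.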

The Drill…

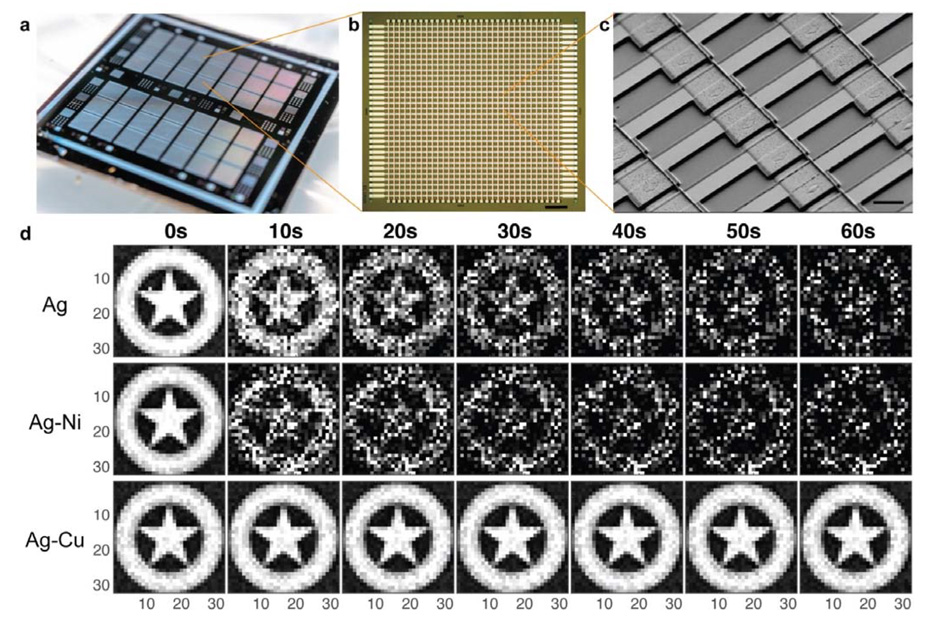

Back to the research: the MIT researchers built a new chip out of tens of thousands of memristors. They fabricated the memristors from alloys of silver and copper, along with silicon.

The main problem with memristors is that their performance drops significantly as they are made smaller. A memristor, the artificial synapse responsible for signaling, consists of two electrodes, one silver and one silicon, with a channel between them. The channel carries the ions that represent signals. When the chip is scaled down, the channel between the electrodes shrinks and the signals are disrupted, which makes the memristors less reliable.

To overcome this, the engineers added a copper layer between the negative silicon electrode and the positive silver electrode, where it acts as a stabilizing bridge. This enabled them to pattern a silicon chip with tens of thousands of memristors in an area of one square millimeter, addressing the loss of reliability that memristors suffer when they are scaled down.

The Performance…

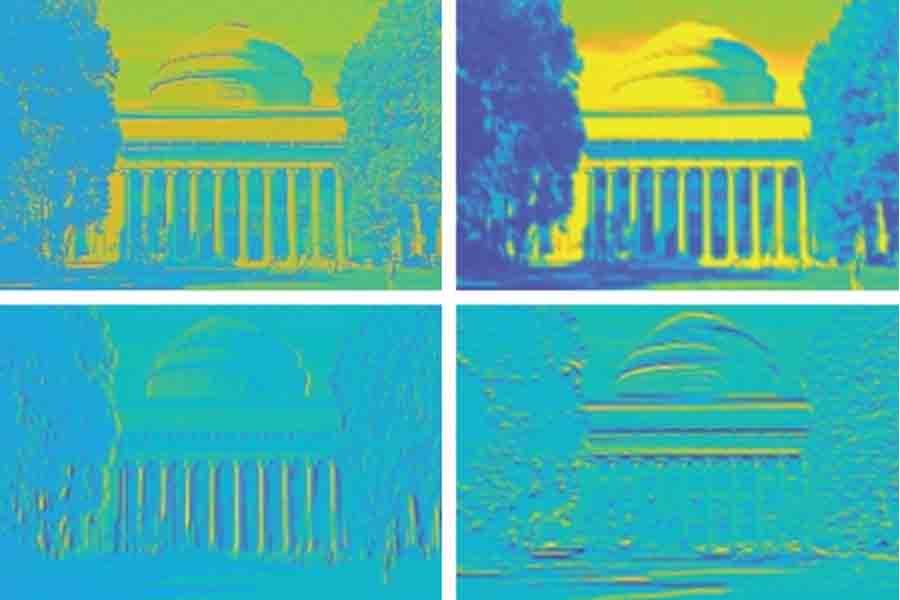

When the new chip was put through several visual tasks, it was able to remember stored images and reproduce them as clearer, crisper versions than earlier memristor designs could.

In their first test, the engineers recreated a gray-scale image of Captain America’s shield. Each pixel in the image was matched to a corresponding memristor on the chip. The chip was able to remember the image and reproduce it several times, recreating the same clear, crisp image from its memory each time.
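The one-pixel-per-memristor idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the researchers' procedure: the function names `program_array` and `read_image` and the conductance range (`g_min`, `g_max`) are hypothetical, and real devices add noise and drift that this sketch ignores.

```python
def program_array(image, g_min=1e-6, g_max=1e-4):
    """Map each 0-255 pixel to one conductance in siemens (assumed range)."""
    scale = (g_max - g_min) / 255
    return [[g_min + pixel * scale for pixel in row] for row in image]


def read_image(conductances, g_min=1e-6, g_max=1e-4):
    """Invert the mapping: turn stored conductances back into 0-255 pixels."""
    scale = 255 / (g_max - g_min)
    return [[round((g - g_min) * scale) for g in row] for row in conductances]


# A tiny gray-scale "image": one list per row, one value per pixel.
image = [
    [0, 128, 255],
    [64, 192, 32],
]

stored = program_array(image)   # one conductance per pixel
recalled = read_image(stored)   # read the array back as an image

print(recalled)
```

In this noise-free sketch the recalled image matches the original exactly. On real hardware, how closely the read-back values match is what separates a reliable memristor design from an unreliable one.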

The accompanying figure compares the performance of other memristor designs with that of the newly designed memristor chip.

The newly designed chip is also reported to outperform standard memristor chips in processing and performance. It is also reported to be capable of image processing tasks such as sharpening and blurring, which the researchers demonstrated by altering an image of MIT’s Killian Court in several specific ways.
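For readers unfamiliar with what sharpening and blurring involve, the sketch below applies two common 3x3 kernels to a small gray-scale image in plain Python. It shows the ordinary software version of these operations, not how the chip computes them in analog hardware. The kernels, the helper name `apply_kernel`, and the sample image are assumptions for illustration.

```python
# Two widely used 3x3 kernels (both symmetric, so no kernel flip is needed).
BLUR = [[1 / 9] * 3 for _ in range(3)]               # average of the neighborhood
SHARPEN = [[0, -1, 0], [-1, 5, -1], [0, -1, 0]]      # boost center, subtract neighbors


def apply_kernel(image, kernel):
    """Apply a 3x3 kernel to interior pixels; border pixels are copied as-is."""
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    acc += kernel[dy + 1][dx + 1] * image[y + dy][x + dx]
            out[y][x] = min(255, max(0, round(acc)))  # clamp to the 0-255 range
    return out


# A flat dark image with one bright pixel in the middle.
image = [
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 100, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
]

blurred = apply_kernel(image, BLUR)
sharpened = apply_kernel(image, SHARPEN)

for row in blurred:
    print(row)
for row in sharpened:
    print(row)
```

Blurring spreads the bright pixel into its neighbors, while sharpening exaggerates the contrast between the bright pixel and its surroundings. Each output pixel is a weighted sum of inputs, which is the kind of multiply-and-add workload that arrays of analog weights are generally well suited to.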

Some Words…

If this technology matures, it could revolutionize computing. The scientists envision memristors that need relatively little chip real estate, enabling more powerful, portable, and energy-efficient neuromorphic chips that would not even need Wi-Fi for real-time processing, replacing the huge supercomputers, internet connections, or cloud services now used to process AI workloads. It could take edge computing to the next level. As Jeehwan Kim, associate professor of mechanical engineering at MIT, put it: “Imagine connecting a neuromorphic device to a camera on your car, and having it recognize lights and objects and make a decision immediately, without having to connect to the internet.” There is a lot of hope, but this technology is still far from being commercialized: it needs to be thoroughly researched, developed, and made highly reliable before it can take on that responsibility.

